Unwanted advances have been a problem on social media platforms from the beginning, but they seem especially out of place on LinkedIn, a platform built for professionals and careers. Even so, many people, especially women, experience harassment there.

To take a stance against this, LinkedIn has outlined a new process to detect and hide inappropriate InMail messages on its platform. The new detection system has been trained on past examples of harassment reported in LinkedIn messages.

Some of the most common harassing messages include:

- Romance Scams: Financial scams carried out through fake or hacked accounts, in which the scammer uses messaging to deceive a member and illegally obtain money from them.

- Inappropriate Advances: LinkedIn is a platform for professionals and career opportunities, yet some members send multiple messages soliciting relationships to members they don’t know.

- Targeted Harassment: This includes bringing disputes from other platforms onto LinkedIn, for example by stalking or trolling members.

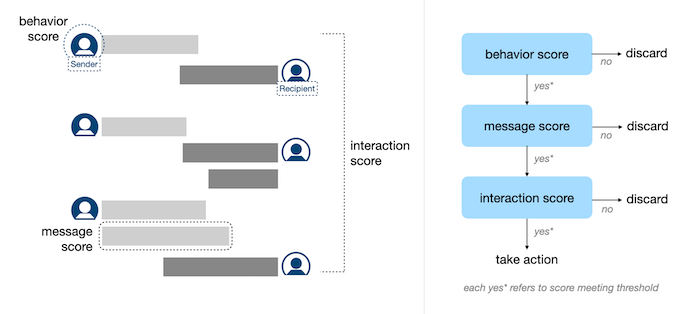

With these examples in mind, LinkedIn has developed an algorithm that detects harassing messages using a three-step process.

- Sender behavior, such as how the sender uses LinkedIn and the total number of invitations sent, is scored by a behavior model. This model is trained on harassment cases previously confirmed through member reports.

- The content of a message is scored by a message model trained on messages that were previously reported and confirmed as harassment.

- The interaction between two members is scored by an interaction model. It considers signals such as how often the members respond to each other and whether the message model predicts most messages in the conversation as harassment. The interaction model is trained on previous conversations that resulted in harassment.
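The three scores described above could be combined along the following lines. This is only a minimal sketch: the article does not say how LinkedIn combines the models, so the `Conversation` fields, the `MESSAGE_THRESHOLD` cutoff, the reply weighting, and the simple average in `is_likely_harassment` are all assumptions made for illustration, and the model outputs are represented as plain numbers between 0 and 1.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical cutoff above which a single message counts as "predicted harassment".
MESSAGE_THRESHOLD = 0.5


@dataclass
class Conversation:
    sender_behavior_score: float  # assumed output of the behavior model, 0..1
    message_scores: List[float]   # assumed message-model score per message, 0..1
    recipient_replies: int        # number of replies sent by the recipient


def interaction_score(conv: Conversation) -> float:
    """Score the conversation from flagged-message ratio and reply frequency."""
    if not conv.message_scores:
        return 0.0
    total = len(conv.message_scores)
    flagged = sum(1 for s in conv.message_scores if s >= MESSAGE_THRESHOLD)
    flagged_ratio = flagged / total
    reply_ratio = min(conv.recipient_replies / total, 1.0)
    # Assumption: frequent replies from the recipient lower the score.
    return flagged_ratio * (1.0 - 0.5 * reply_ratio)


def is_likely_harassment(conv: Conversation, threshold: float = 0.6) -> bool:
    """Average the three scores (an assumed rule) and compare to a threshold."""
    latest = conv.message_scores[-1] if conv.message_scores else 0.0
    combined = (conv.sender_behavior_score + latest + interaction_score(conv)) / 3
    return combined >= threshold
```

In this sketch, a sender with a high behavior score whose messages are mostly flagged and go unanswered ends up above the threshold, while an ordinary two-way conversation does not.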

Once a problem is detected, LinkedIn will hide the incoming message and let the recipient choose whether or not to read it. The recipient can also submit a harassment report instead.
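The recipient-side flow can be pictured as a small state change on each message. The sketch below is illustrative only: `InboxMessage`, `RecipientAction`, `deliver`, and `handle_action` are hypothetical names, not part of any LinkedIn API, and the `flagged` value is assumed to come from the detection step.

```python
from dataclasses import dataclass
from enum import Enum


class RecipientAction(Enum):
    VIEW = "view"      # recipient chooses to read the hidden message
    REPORT = "report"  # recipient submits a harassment report instead


@dataclass
class InboxMessage:
    text: str
    flagged: bool           # assumed result of the detection step
    hidden: bool = False
    reported: bool = False


def deliver(text: str, flagged: bool) -> InboxMessage:
    """Flagged messages arrive hidden; all others are shown as usual."""
    return InboxMessage(text=text, flagged=flagged, hidden=flagged)


def handle_action(msg: InboxMessage, action: RecipientAction) -> InboxMessage:
    """Apply the recipient's choice to a message."""
    if action is RecipientAction.VIEW:
        msg.hidden = False
    elif action is RecipientAction.REPORT:
        msg.reported = True
    return msg
```

A message the recipient never acts on simply stays hidden, which matches the option of not reading it at all.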

LinkedIn expects this process to reduce instances of harassing behavior on the platform and make members feel much safer.

Instagram is introducing a new authenticity measure on its platform that will require the owner of an account showing suspicious behavior to provide identification to confirm whether they are a real person or a bot.

Instagram said it wants content on its platform to come from real people, not bots or others who are trying to mislead users. Starting today, it will ask members who have shown patterns of inauthentic behavior to confirm who is behind their account.

This will include accounts with a majority of followers in a different country from the account's location, accounts that show signs of automation such as bot accounts among their followers, and multiple accounts engaged in coordinated inauthentic behavior.

Instagram said that if an account holder chooses not to confirm their information, their content will receive “reduced redistribution” or the account will be disabled.
